What is big data? Big data refers to data whose scale, complexity, and diversity call for novel architectures, techniques, and analytics to manage it and to extract hidden knowledge and value from it. The following sections describe the programs used to implement big data analytics.

What is Spark?

Spark is an Apache project built for lightning-fast cluster computing. It offers a fast data processing platform and is reported to run programs up to 10x faster on disk and up to 100x faster in memory than Hadoop MapReduce.

What is Spark used for?

Spark is a general-purpose distributed data processing engine that suits a wide range of circumstances. Its libraries for SQL, graph computation, stream processing, and machine learning can be used together in the same application.

Is Spark written in Java?

Spark is written mainly in Scala, with parts in Java, and Scala code compiles to bytecode that runs on the Java Virtual Machine (JVM). In addition, Spark provides APIs for programming languages such as

- Scala

- Java

- Python

- R

Scala is considered one of the most important languages for building Spark applications. Tools from the Hadoop ecosystem such as Pig and Hive can also run on top of Spark.

What is GitHub?

GitHub is a cloud-based website and service that helps developers store and manage their code and track and control changes to it. It is built on Git, a distributed version control system in which every developer's copy holds the complete codebase and its history. This makes branching and merging easy.

Spark main components

There are five significant components in Apache Spark, and they are highlighted below for a big data analytics implementation project.

- GraphX

  - A collection of APIs that supports graph analytics

- Machine learning library (MLlib)

  - A library of machine learning algorithms, designed mainly for scalability and accessibility

- Spark Streaming

  - This component processes live data streams, and the data can originate from different sources such as

    - Flume

    - Kinesis

    - Kafka

- Spark SQL

  - It is used to work with structured data and to process it

- Apache Spark Core

  - Spark Core is responsible for the basic functions such as task dispatching, scheduling, fault recovery, and input and output operations

Spark from multiple angles – Categorization

- Scheduling and resource management

  - Spark includes tools for monitoring, scheduling, and resource allocation

- Machine learning

  - MLlib is used for machine learning computations

  - In-memory processing makes these computations fast

- Ease of use and language support

  - It is user-friendly and offers an interactive shell mode

  - APIs are available in languages such as

    - SQL (through Spark SQL)

    - Java

    - R

    - Python

    - Scala

- Security

  - Security features such as authentication are turned off by default, so a fresh deployment is not secure. Spark often relies on integration with Hadoop (for example Kerberos authentication and HDFS permissions) to reach the required security level

- Scalability

  - Scaling is somewhat harder because the computations depend on RAM, but Spark still supports thousands of nodes in a cluster

- Fault tolerance

  - Spark tracks how each RDD block was created, so it can rebuild a dataset when a partition fails. It can also use the DAG to rebuild data across nodes

- Data processing

  - Spark suits live stream analysis and iterative processing, and RDDs and DAGs are used to run the operations

- Performance

  - In-memory processing makes Spark fast because it reduces disk read and write operations
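The fault tolerance point above can be pictured with a small sketch. It is a toy model in plain Python, not Spark's API: the `ToyRDD` class and its `lose_partition` method are hypothetical names invented for illustration. Each dataset remembers its parent and the transformation that produced it (its lineage), so a lost partition can be recomputed instead of being restored from a replica.

```python
class ToyRDD:
    """Hypothetical stand-in for an RDD: partitions plus the lineage that produced them."""

    def __init__(self, parent=None, transform=None, source=None):
        self.parent = parent        # previous node in the DAG (None for source data)
        self.transform = transform  # function that derives one partition from the parent's
        self.source = source        # list of partitions, only set on the root dataset
        self.cache = {}             # partitions currently held "in memory"

    def map(self, fn):
        # Transformations only record lineage; nothing is computed yet.
        return ToyRDD(parent=self, transform=lambda part: [fn(x) for x in part])

    def filter(self, pred):
        return ToyRDD(parent=self, transform=lambda part: [x for x in part if pred(x)])

    def compute(self, index):
        # Return the cached partition, or rebuild it by walking the lineage.
        if index in self.cache:
            return self.cache[index]
        if self.parent is None:
            part = list(self.source[index])
        else:
            part = self.transform(self.parent.compute(index))
        self.cache[index] = part
        return part

    def lose_partition(self, index):
        # Simulate a failure that wipes one partition from memory.
        self.cache.pop(index, None)


numbers = ToyRDD(source=[[1, 2, 3], [4, 5, 6]])
even_squares = numbers.filter(lambda x: x % 2 == 0).map(lambda x: x * x)

first = even_squares.compute(1)     # computed from lineage: [16, 36]
even_squares.lose_partition(1)      # the partition is lost
rebuilt = even_squares.compute(1)   # recomputed from the recorded transformations
print(first, rebuilt)
```

Only the lost partition is recomputed, which is why lineage tracking is cheaper than replicating every dataset.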

We have discussed the significant angles of Spark. These features make Spark suitable for several use cases in big data analytics implementation.

Use cases of Spark

- End-to-end machine learning applications

- Data that is modeled as a graph and handled with graph-parallel processing

- Analysis of real-time stream data

- Iterative algorithms that run chains of parallel operations

- Workloads that need quick results, which benefit from in-memory computation

The above are significant use cases for Spark. The following sections describe how an Apache Spark application runs.

How to run an Apache Spark application on a cluster?

- The user submits an application using spark-submit

- spark-submit launches the driver program and invokes the main method specified by the user

- The driver program contacts the cluster manager to ask for the resources needed to launch executors

- The cluster manager launches the executors on behalf of the driver program

- The driver process runs the user application. Based on the transformations and actions on RDDs in the program, the driver sends work to the executors in the form of tasks

- The tasks run on the executor processes, and the results are sent back to the driver
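The steps above can be sketched in plain Python. This is a toy model, not Spark code: a thread pool stands in for the executors, and the function names `run_job` and `count_words` are hypothetical. The driver sends one task per partition and collects the results.

```python
from concurrent.futures import ThreadPoolExecutor


def run_job(partitions, task, num_executors=2):
    """Driver side: send one task per partition to the 'executors' and collect the results."""
    with ThreadPoolExecutor(max_workers=num_executors) as executors:
        # map() returns the results in partition order.
        return list(executors.map(task, partitions))


def count_words(lines):
    """Task run on an executor: count the words in one partition."""
    return sum(len(line.split()) for line in lines)


partitions = [
    ["big data analytics", "apache spark"],
    ["driver and executors"],
]
per_partition = run_job(partitions, count_words)  # one result per task
total = sum(per_partition)                        # the driver combines the results
print(per_partition, total)
```

In a real cluster the executors are separate processes on worker nodes, and the cluster manager decides where they run.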

How to launch a program in Spark?

- spark-submit is a single script that ships with Spark and submits a program to any supported cluster manager

- spark-submit offers options for connecting to different cluster managers and for controlling how many resources the application gets

- With some cluster managers, spark-submit can run the driver inside the cluster, while with others the driver runs only on the local machine
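As a minimal sketch of the launch options above, the helper below assembles a spark-submit command line. The helper name `build_spark_submit` and the file names `wordcount.py` and `input.txt` are hypothetical. The `--master`, `--deploy-mode`, and `--conf` options are standard spark-submit options, and the sketch only builds the command without running it.

```python
import shlex


def build_spark_submit(app, master, deploy_mode="client", conf=None, app_args=()):
    """Assemble a spark-submit command as a list of arguments (hypothetical helper)."""
    cmd = ["spark-submit", "--master", master, "--deploy-mode", deploy_mode]
    for key, value in (conf or {}).items():
        cmd += ["--conf", f"{key}={value}"]  # one --conf per Spark property
    cmd.append(app)                          # the application file comes after the options
    cmd.extend(app_args)                     # anything after it is passed to the application
    return cmd


cmd = build_spark_submit(
    "wordcount.py",                          # hypothetical application file
    master="yarn",                           # which cluster manager to connect to
    deploy_mode="cluster",                   # run the driver inside the cluster
    conf={"spark.executor.memory": "2g"},
    app_args=["input.txt"],                  # hypothetical application argument
)
print(shlex.join(cmd))
# spark-submit --master yarn --deploy-mode cluster --conf spark.executor.memory=2g wordcount.py input.txt
```

On a machine with Spark installed, the list could be passed to `subprocess.run(cmd, check=True)`. With `deploy_mode="client"` the driver would stay on the local machine instead.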

Spark in MapReduce

- In addition to standalone deployment, Spark in MapReduce is used to launch Spark jobs inside MapReduce

- Users can experiment with Spark through its shells soon after downloading it, which lets virtually everyone try Spark

Interoperability with other systems

- Apache Mesos

  - Mesos is a cluster manager that provides resource isolation across distributed applications such as Hadoop and MPI

  - It enables fine-grained sharing, so a Spark job can take advantage of idle resources in the cluster while it executes

  - This can noticeably improve the performance of Spark jobs

- Apache Hive

  - Spark lets Apache Hive users run their queries unmodified

  - Hive is a popular data warehouse solution that runs on top of Hadoop, and Shark is a system that lets the Hive framework run on top of Spark

  - In addition, Shark can accelerate Hive queries, with the speedup depending on whether the input data fits in memory or is stored on disk. Shark has since been superseded by Spark SQL

- AWS EC2

  - Spark runs easily on Amazon EC2, either with the deployment scripts or through the versions hosted on Amazon Elastic MapReduce

We hope you now have a basic idea of big data analytics implementation. If you have any doubts about your implementation process, contact us for more research suggestions. We are always ready to help you complete your research work, starting from novel big data analysis topics.

Subscribe Our Youtube Channel

You can Watch all Subjects Matlab & Simulink latest Innovative Project Results

Our services

We want to support Uncompromise Matlab service for all your Requirements Our Reseachers and Technical team keep update the technology for all subjects ,We assure We Meet out Your Needs.

Our Services

- Matlab Research Paper Help

- Matlab assignment help

- Matlab Project Help

- Matlab Homework Help

- Simulink assignment help

- Simulink Project Help

- Simulink Homework Help

- Matlab Research Paper Help

- NS3 Research Paper Help

- Omnet++ Research Paper Help

Our Benefits

- Customised Matlab Assignments

- Global Assignment Knowledge

- Best Assignment Writers

- Certified Matlab Trainers

- Experienced Matlab Developers

- Over 400k+ Satisfied Students

- Ontime support

- Best Price Guarantee

- Plagiarism Free Work

- Correct Citations

Expert Matlab services just 1-click

Delivery Materials

Unlimited support we offer you

For better understanding purpose we provide following Materials for all Kind of Research & Assignment & Homework service.

Programs

Programs Designs

Designs Simulations

Simulations Results

Results Graphs

Graphs Result snapshot

Result snapshot Video Tutorial

Video Tutorial Instructions Profile

Instructions Profile  Sofware Install Guide

Sofware Install Guide Execution Guidance

Execution Guidance  Explanations

Explanations Implement Plan

Implement Plan

Matlab Projects

Matlab projects innovators has laid our steps in all dimension related to math works.Our concern support matlab projects for more than 10 years.Many Research scholars are benefited by our matlab projects service.We are trusted institution who supplies matlab projects for many universities and colleges.

Reasons to choose Matlab Projects .org???

Our Service are widely utilized by Research centers.More than 5000+ Projects & Thesis has been provided by us to Students & Research Scholars. All current mathworks software versions are being updated by us.

Our concern has provided the required solution for all the above mention technical problems required by clients with best Customer Support.

- Novel Idea

- Ontime Delivery

- Best Prices

- Unique Work

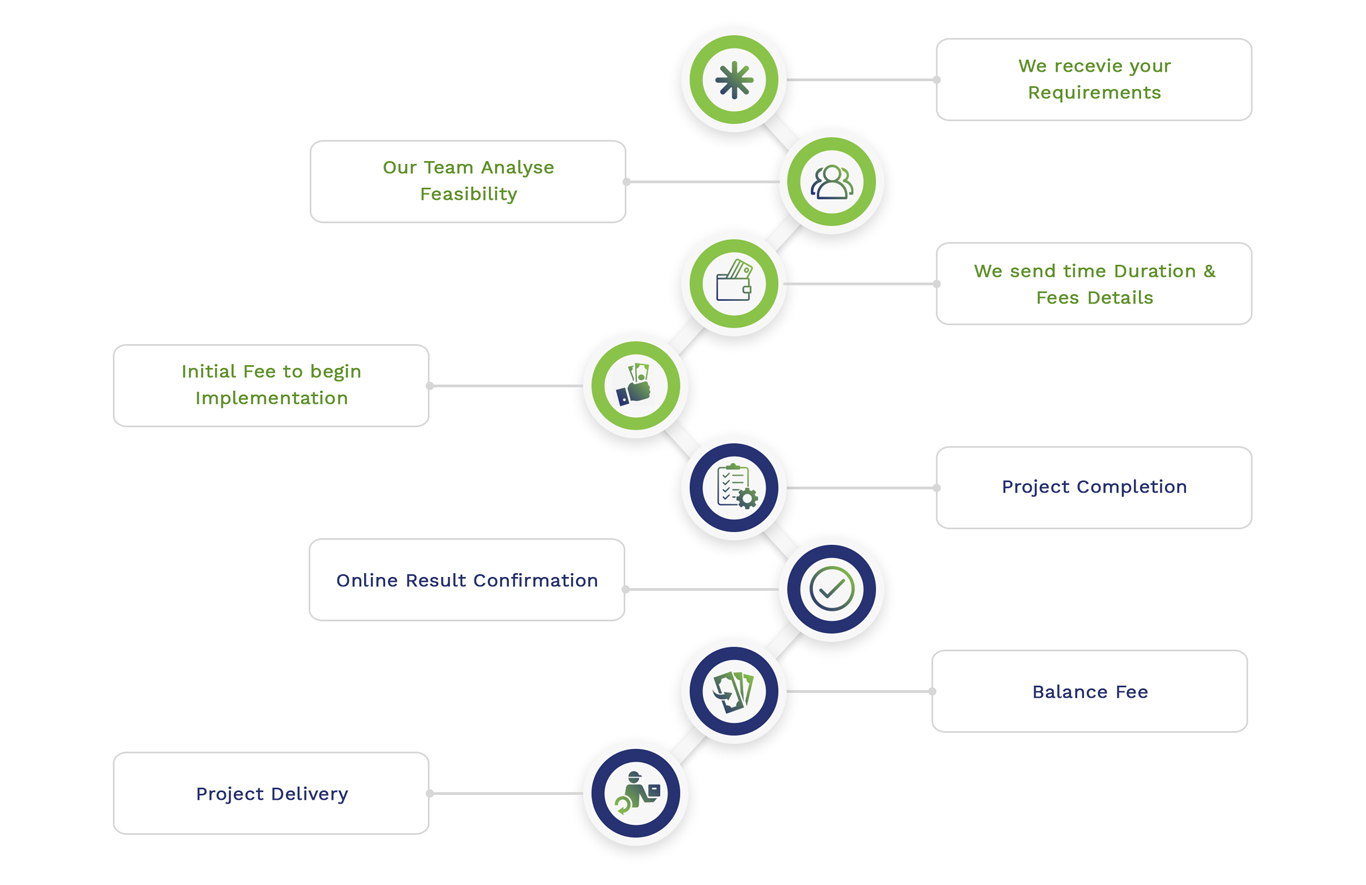

Simulation Projects Workflow

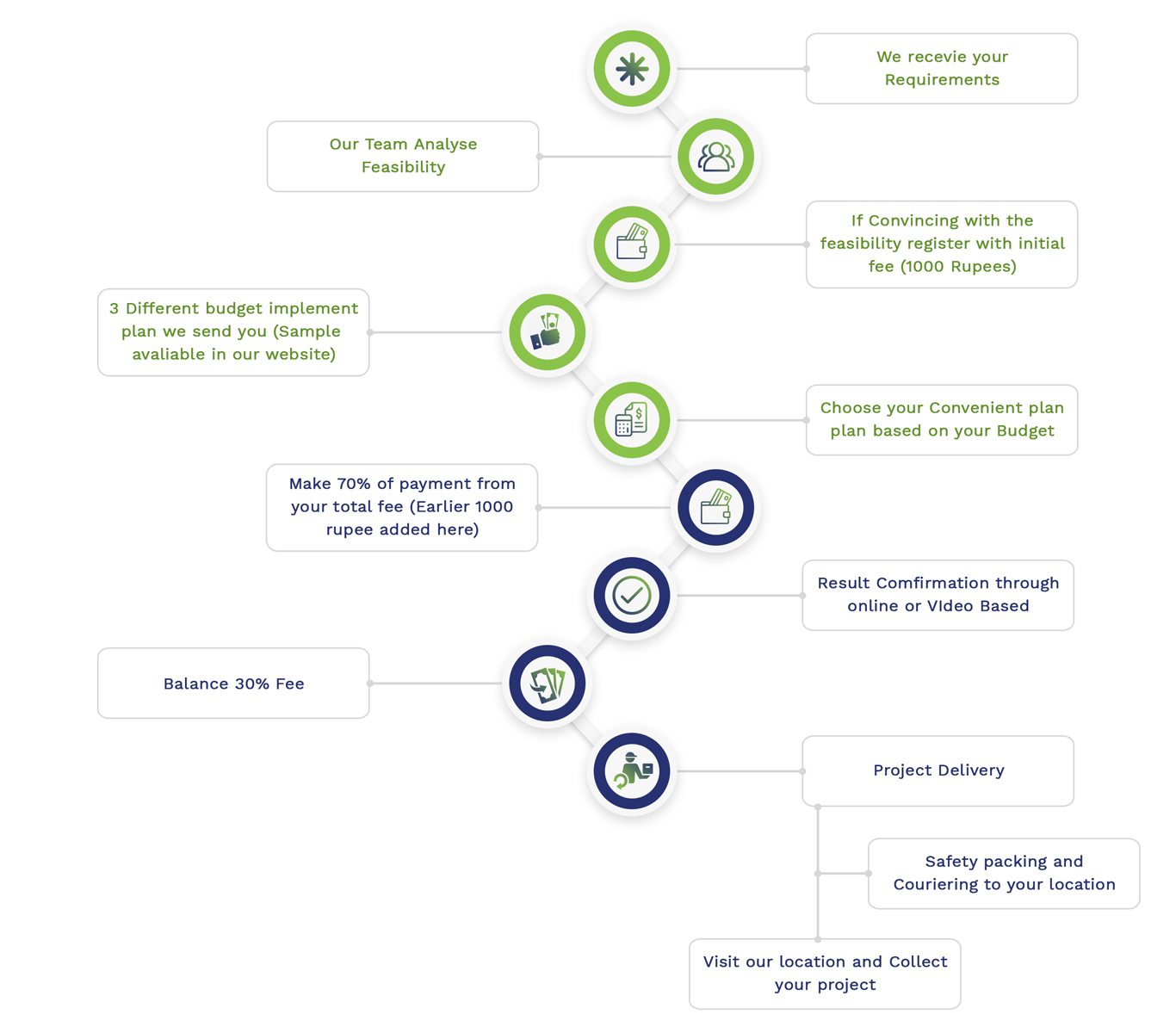

Embedded Projects Workflow

Matlab

Matlab Simulink

Simulink NS3

NS3 OMNET++

OMNET++ COOJA

COOJA CONTIKI OS

CONTIKI OS NS2

NS2