Big data is characterized by the 3Vs: volume, velocity, and variety. Data at this scale cannot be handled by a conventional database management system, so managing it requires new technologies and methods. For this reason, we focus fully on big data Hadoop projects. The research ideas in Hadoop are not limited to those listed on this page.

Apache Hadoop is open-source software for reliable, scalable, distributed computing. The Apache Hadoop software library is a framework that allows the distributed processing of large datasets across clusters of computers using simple programming models.

What is Hadoop used for in big data?

Hadoop is an open-source, Java-based framework for storing and processing big data. Its clusters run on inexpensive commodity servers, and the data is stored across those servers. The Hadoop Distributed File System (HDFS) splits data into blocks and replicates them across nodes, which provides fault tolerance and allows the data to be processed in parallel.
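To make the block-and-replica idea concrete, here is a minimal Python sketch of how a distributed file system like HDFS might split a file into fixed-size blocks and spread copies of each block over several DataNodes. It assumes a 128 MB block size and a replication factor of 3 (the usual defaults of the dfs.blocksize and dfs.replication settings) and uses simple round-robin placement; real HDFS uses a rack-aware placement policy, and all function names here are hypothetical.

```python
import math

# Assumed defaults: dfs.blocksize = 128 MB (Hadoop 2.x and later), dfs.replication = 3.
BLOCK_SIZE_MB = 128
REPLICATION = 3


def split_into_blocks(file_size_mb, block_size_mb=BLOCK_SIZE_MB):
    """Return the size of each block; only the last block may be smaller than block_size_mb."""
    n_blocks = math.ceil(file_size_mb / block_size_mb)
    sizes = [block_size_mb] * n_blocks
    remainder = file_size_mb % block_size_mb
    if remainder:
        sizes[-1] = remainder
    return sizes


def place_replicas(n_blocks, datanodes, replication=REPLICATION):
    """Simplified round-robin placement: every block's replicas go to distinct DataNodes.

    Real HDFS uses a rack-aware policy instead; this only illustrates the idea.
    """
    if replication > len(datanodes):
        raise ValueError("replication factor exceeds the number of DataNodes")
    return {
        block: [datanodes[(block + r) % len(datanodes)] for r in range(replication)]
        for block in range(n_blocks)
    }


def blocks_readable_after_failure(placement, failed_node):
    """Fault-tolerance check: True if every block still has a replica on a live node."""
    return all(any(node != failed_node for node in nodes) for nodes in placement.values())


if __name__ == "__main__":
    sizes = split_into_blocks(300)                        # [128, 128, 44]
    layout = place_replicas(len(sizes), ["dn1", "dn2", "dn3", "dn4"])
    print(sizes, layout[0])                               # [128, 128, 44] ['dn1', 'dn2', 'dn3']
    print(blocks_readable_after_failure(layout, "dn2"))   # True
```

Because each block lives on several nodes, losing one server leaves other copies available, and different blocks of the same file can be read and processed on different machines at the same time.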

Where is Hadoop used?

Hadoop is commonly used in big data applications that handle:

- Transaction data

- Clickstream data

- Social media data

Are big data and Hadoop the same?

No. Big data is an asset that can be highly valuable, whereas Hadoop is a program used to extract value from that asset. This is the key difference between big data and Hadoop.

Is Hadoop only for big data?

Hadoop is not meant to replace the existing components of a data processing architecture; instead, it can work alongside them. The Hadoop Distributed File System (HDFS) stores data so that it can be processed and transformed into structured, manageable data.

Does Hadoop require coding?

Hadoop is an open-source, Java-based framework for the distributed storage and processing of large amounts of data, and using it does not necessarily require coding. Researchers who want to implement big data Hadoop projects should take a Hadoop certification course and study Hive and Pig, which build on SQL fundamentals.

Is Hadoop a NoSQL database?

No. Hadoop is a software ecosystem that enables massively parallel computing; it is not a type of database. However, NoSQL distributed databases such as HBase run on Hadoop and allow data to be spread across thousands of servers with little reduction in performance.
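As a rough illustration of how a NoSQL store such as HBase can spread one table across many servers, the sketch below assumes HBase-style range partitioning: the sorted row-key space is cut into regions, each hosted by one server, and a lookup finds the region whose start key is the largest one not greater than the row key. The region boundaries and server names are made up for illustration.

```python
import bisect

# Hypothetical layout: sorted region start keys and the server hosting each region.
# The empty string marks the first region, which has no lower bound.
REGION_START_KEYS = ["", "g", "n", "t"]
REGION_SERVERS = ["rs1", "rs2", "rs3", "rs4"]


def locate_region(row_key, start_keys=REGION_START_KEYS, servers=REGION_SERVERS):
    """Return the server whose region's key range contains row_key."""
    # bisect_right gives the index of the first start key greater than row_key,
    # so the region just before that index owns the key.
    idx = bisect.bisect_right(start_keys, row_key) - 1
    return servers[idx]


if __name__ == "__main__":
    for key in ["apple", "hbase", "zookeeper"]:
        print(key, "->", locate_region(key))   # apple -> rs1, hbase -> rs2, zookeeper -> rs4
```

In this simplified model, adding capacity means splitting a busy region's key range and moving one half to a new server, so each lookup stays a single binary search no matter how many servers there are.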

Enterprise data warehouse (EDW)

Apache projects related to Hadoop fit into an enterprise data warehouse (EDW) architecture along two paths: a fast path and a slow path.

- Fast path

  - GUI visualization CRM

  - BI rules

  - Pregel graph

  - CEP and DSMS

  - Impala

  - HOP

  - Drill

  - Dremel

  - ODS

  - HBase

- Slow path

  - Data mining

  - Mahout

  - Data warehouse

  - Hive

  - Database

  - Spanner

  - HBase

  - ETL

  - Flume

  - HDFS-MR

  - Sqoop

Big data Hadoop deployment

First, the requirements and business objectives of the big data solution are documented and studied. Next, the goals of the big data Hadoop project are defined, and the researcher surveys the available tools and architectures for the required big data functions, then compares them to choose the ones that best fit the research requirements. The data storage, ingestion policy, and processing requirements are then planned for the deployment. Finally, the solution can be deployed either automatically or manually.

What value does big data offer?

Big data contains hidden insights, patterns, and trends that can transform a business's understanding of its market. These insights also support business strategies built on the latest techniques.

- Businesses that fail to tap the potential of big data risk being pushed out of the market

- Big data can increase revenue and reduce marketing costs and effort

- Big data improves understanding of the market and of customers, which supports implementation and innovation

- It reduces costs and improves efficiency

Why is Hadoop needed?

Hadoop can run on a single machine, but its real power comes from clusters of machines: its core components, MapReduce and the Hadoop Distributed File System (HDFS), scale from a single machine to thousands of nodes. Hadoop efficiently processes large volumes of data on clusters of commodity hardware.

What is the Hadoop API?

The Hadoop YARN web service REST APIs expose information about the cluster, its nodes, applications, and application history as a set of URI resources. These URI resources are grouped into APIs according to the type of information they return.
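As a hedged example of calling these REST resources, the Python sketch below builds ResourceManager URIs under /ws/v1/cluster (such as /ws/v1/cluster/info, /ws/v1/cluster/metrics, /ws/v1/cluster/apps, and /ws/v1/cluster/nodes) and reads a few fields from the cluster metrics response. It assumes the ResourceManager web address is localhost on the default port 8088; the helper names are hypothetical, and only fetch_metrics needs a running cluster.

```python
import json
from urllib.request import Request, urlopen

# Assumption: ResourceManager web address on its default port 8088; change for your cluster.
RM_BASE = "http://localhost:8088"


def rm_url(resource, base=RM_BASE):
    """Build a ResourceManager REST URI; an empty resource gives /ws/v1/cluster itself."""
    return f"{base}/ws/v1/cluster/{resource}".rstrip("/")


def summarize_metrics(payload):
    """Pick a few fields out of a JSON response from /ws/v1/cluster/metrics."""
    metrics = json.loads(payload)["clusterMetrics"]
    return {key: metrics[key] for key in ("appsRunning", "activeNodes", "availableMB")}


def fetch_metrics(base=RM_BASE):
    """Query a live ResourceManager (needs network access to the cluster)."""
    request = Request(rm_url("metrics", base), headers={"Accept": "application/json"})
    with urlopen(request, timeout=10) as response:
        return summarize_metrics(response.read().decode("utf-8"))


if __name__ == "__main__":
    print(rm_url("metrics"))   # http://localhost:8088/ws/v1/cluster/metrics
    # print(fetch_metrics())   # uncomment when a ResourceManager is reachable
```

Requesting JSON explicitly through the Accept header keeps the parsing simple; the same APIs can also return XML.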

How does Hadoop work?

Hadoop stores and processes data by distributing it across clusters of commodity hardware. Clients submit their data and programs to the Hadoop cluster, which stores and processes them in three stages:

- First, HDFS stores the data

- Then, MapReduce processes the data stored in HDFS

- Finally, YARN schedules the tasks and allocates cluster resources
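The map, shuffle, and reduce flow that MapReduce applies to data in HDFS can be simulated on one machine. The Python sketch below is a minimal single-process word count, not Hadoop's Java API; the CRC32-based partitioner is an assumption standing in for Hadoop's default hash partitioner, and on a real cluster YARN would run the map and reduce tasks on different nodes.

```python
import zlib
from collections import defaultdict


def map_phase(line):
    """Mapper: emit (word, 1) for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def partition(key, num_reducers):
    """Pick a reducer for a key; a stable CRC32 hash stands in for the hash partitioner."""
    return zlib.crc32(key.encode("utf-8")) % num_reducers


def shuffle(mapped_pairs, num_reducers):
    """Shuffle: route each pair to its reducer and group the values by key."""
    partitions = [defaultdict(list) for _ in range(num_reducers)]
    for key, value in mapped_pairs:
        partitions[partition(key, num_reducers)][key].append(value)
    return partitions


def reduce_phase(key, values):
    """Reducer: sum the counts for one word."""
    return key, sum(values)


def word_count(lines, num_reducers=2):
    """Run map, shuffle, and reduce over the input lines and merge the reducer outputs."""
    mapped = (pair for line in lines for pair in map_phase(line))
    result = {}
    for part in shuffle(mapped, num_reducers):
        for key in sorted(part):   # each reducer processes its keys in sorted order
            word, total = reduce_phase(key, part[key])
            result[word] = total
    return result


if __name__ == "__main__":
    # "hadoop" and "data" are counted twice, "stores" and "processes" once
    print(word_count(["Hadoop stores data", "Hadoop processes data"]))
```

Because every occurrence of a word hashes to the same reducer, each reducer can produce final counts for its keys without talking to the others, which is what lets the reduce step run in parallel.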

What is scalability in Hadoop?

Scalability is a fundamental advantage of Hadoop: clients can scale a cluster by adding nodes. There are two types of scalability in big data Hadoop projects, horizontal and vertical. Vertical scalability (scale-up) means adding resources such as CPU, memory, or disk to existing nodes, while horizontal scalability (scale-out) means adding more nodes to the cluster.
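A back-of-the-envelope calculation shows how both kinds of scaling affect storage. The Python sketch below assumes the default replication factor of 3 and treats usable HDFS capacity as raw disk divided by the replication factor; the node counts and disk sizes are hypothetical, and the estimate ignores space reserved for the operating system, logs, and intermediate job output.

```python
def usable_capacity_tb(num_nodes, disk_per_node_tb, replication=3):
    """Rough usable HDFS capacity in TB: total raw disk divided by the replication factor."""
    return num_nodes * disk_per_node_tb / replication


baseline = usable_capacity_tb(10, 12)    # 10 nodes with 12 TB each
scaled_out = usable_capacity_tb(15, 12)  # horizontal: add 5 nodes
scaled_up = usable_capacity_tb(10, 18)   # vertical: add 6 TB to every node
print(baseline, scaled_out, scaled_up)   # 40.0 60.0 60.0
```

Both options reach the same storage figure here, but scaling out also adds CPU, memory, and network bandwidth for processing, while scaling up is capped by the largest machine available.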

When to use Hadoop?

- Parallel data processing

- y processing big data

- Storing various datasets

Latest research topics for Big data Hadoop projects

- Hadoop MapReduce in mobile clouds

- Hadoop MapReduce and Spark for big data management and processing

- Big data analysis using the Hadoop framework and clusters

- Data analysis using the Hadoop MapReduce environment

Who takes care of replication consistency in a Hadoop cluster, and what do under-replicated and over-replicated blocks mean?

The NameNode is responsible for replication consistency in the cluster. The fsck command (hdfs fsck) reports information about under-replicated and over-replicated blocks.

- Over-replicated blocks

  - Blocks that have more replicas than the file's target replication factor

  - HDFS deletes the excess replicas automatically, so they do not cause problems

- Under-replicated blocks

  - Blocks that have fewer replicas than the file's target replication factor

  - HDFS creates new replicas until the target replication is met
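The check behind the under- and over-replicated categories above can be pictured as a comparison between each block's live replica count and its file's target replication factor. The Python sketch below is a simplified model under that assumption, with made-up block IDs and hypothetical function names; it does not reproduce HDFS internals such as rack awareness or decommissioning, and on a real cluster hdfs fsck is the tool that reports these counts.

```python
def replication_status(live_replicas, target_replication):
    """Classify one block and return how many replicas must be deleted or created."""
    if live_replicas > target_replication:
        # over-replicated: the excess replicas can be deleted
        return "over-replicated", live_replicas - target_replication
    if live_replicas < target_replication:
        # under-replicated: new replicas must be created on other nodes
        return "under-replicated", target_replication - live_replicas
    return "ok", 0


def plan_repairs(blocks):
    """Map each block id to (status, replica delta) given (live, target) counts."""
    return {block_id: replication_status(live, target) for block_id, (live, target) in blocks.items()}


if __name__ == "__main__":
    # Illustrative input: block id -> (live replicas, target replication)
    example = {"blk_1": (3, 3), "blk_2": (5, 3), "blk_3": (1, 3)}
    for block_id, (status, delta) in plan_repairs(example).items():
        print(block_id, status, delta)
```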

Contact us for more big data Hadoop projects. We can also help you develop your own ideas, drawing on our experience. Our research professionals help you obtain the best results using new algorithms, the latest big data essay topics, and other techniques, and we are ready to suggest novel techniques and methodologies for your research.

Subscribe Our Youtube Channel

You can Watch all Subjects Matlab & Simulink latest Innovative Project Results

Our services

We want to support Uncompromise Matlab service for all your Requirements Our Reseachers and Technical team keep update the technology for all subjects ,We assure We Meet out Your Needs.

Our Services

- Matlab Research Paper Help

- Matlab assignment help

- Matlab Project Help

- Matlab Homework Help

- Simulink assignment help

- Simulink Project Help

- Simulink Homework Help

- Matlab Research Paper Help

- NS3 Research Paper Help

- Omnet++ Research Paper Help

Our Benefits

- Customised Matlab Assignments

- Global Assignment Knowledge

- Best Assignment Writers

- Certified Matlab Trainers

- Experienced Matlab Developers

- Over 400k+ Satisfied Students

- Ontime support

- Best Price Guarantee

- Plagiarism Free Work

- Correct Citations

Expert Matlab services just 1-click

Delivery Materials

Unlimited support we offer you

For better understanding purpose we provide following Materials for all Kind of Research & Assignment & Homework service.

Programs

Programs Designs

Designs Simulations

Simulations Results

Results Graphs

Graphs Result snapshot

Result snapshot Video Tutorial

Video Tutorial Instructions Profile

Instructions Profile  Sofware Install Guide

Sofware Install Guide Execution Guidance

Execution Guidance  Explanations

Explanations Implement Plan

Implement Plan

Matlab Projects

Matlab projects innovators has laid our steps in all dimension related to math works.Our concern support matlab projects for more than 10 years.Many Research scholars are benefited by our matlab projects service.We are trusted institution who supplies matlab projects for many universities and colleges.

Reasons to choose Matlab Projects .org???

Our Service are widely utilized by Research centers.More than 5000+ Projects & Thesis has been provided by us to Students & Research Scholars. All current mathworks software versions are being updated by us.

Our concern has provided the required solution for all the above mention technical problems required by clients with best Customer Support.

- Novel Idea

- Ontime Delivery

- Best Prices

- Unique Work

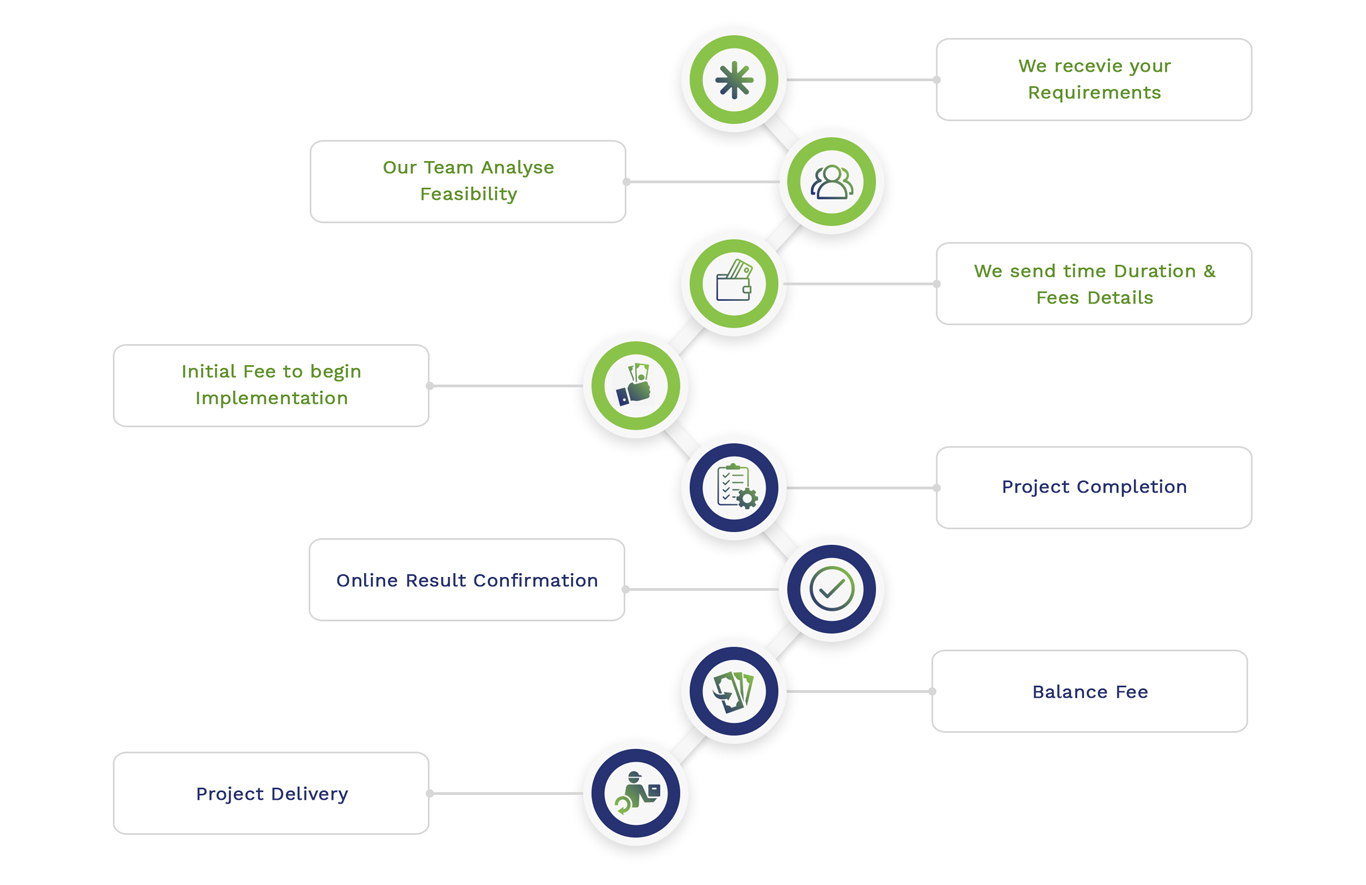

Simulation Projects Workflow

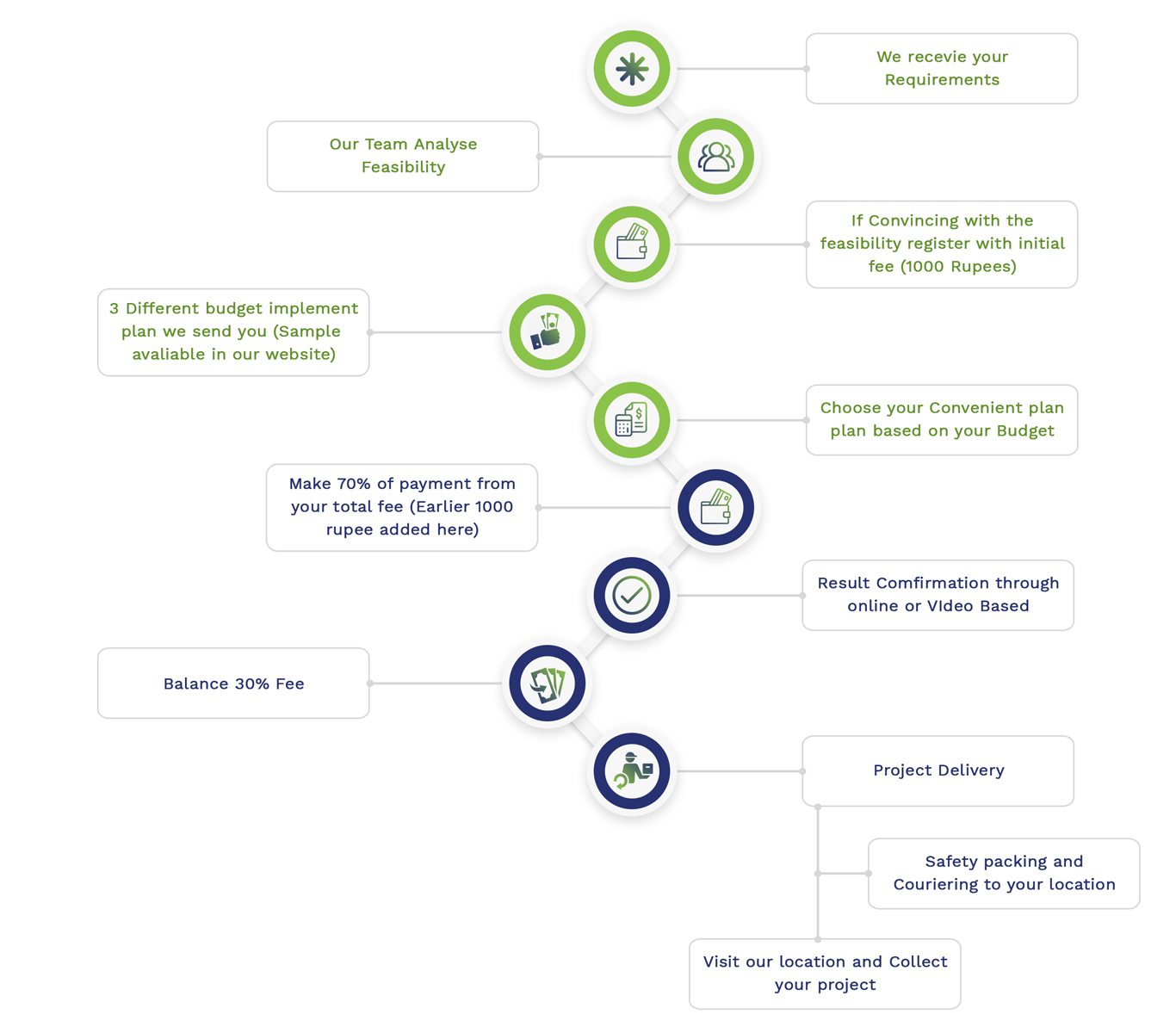

Embedded Projects Workflow

Matlab

Matlab Simulink

Simulink NS3

NS3 OMNET++

OMNET++ COOJA

COOJA CONTIKI OS

CONTIKI OS NS2

NS2